Abstract

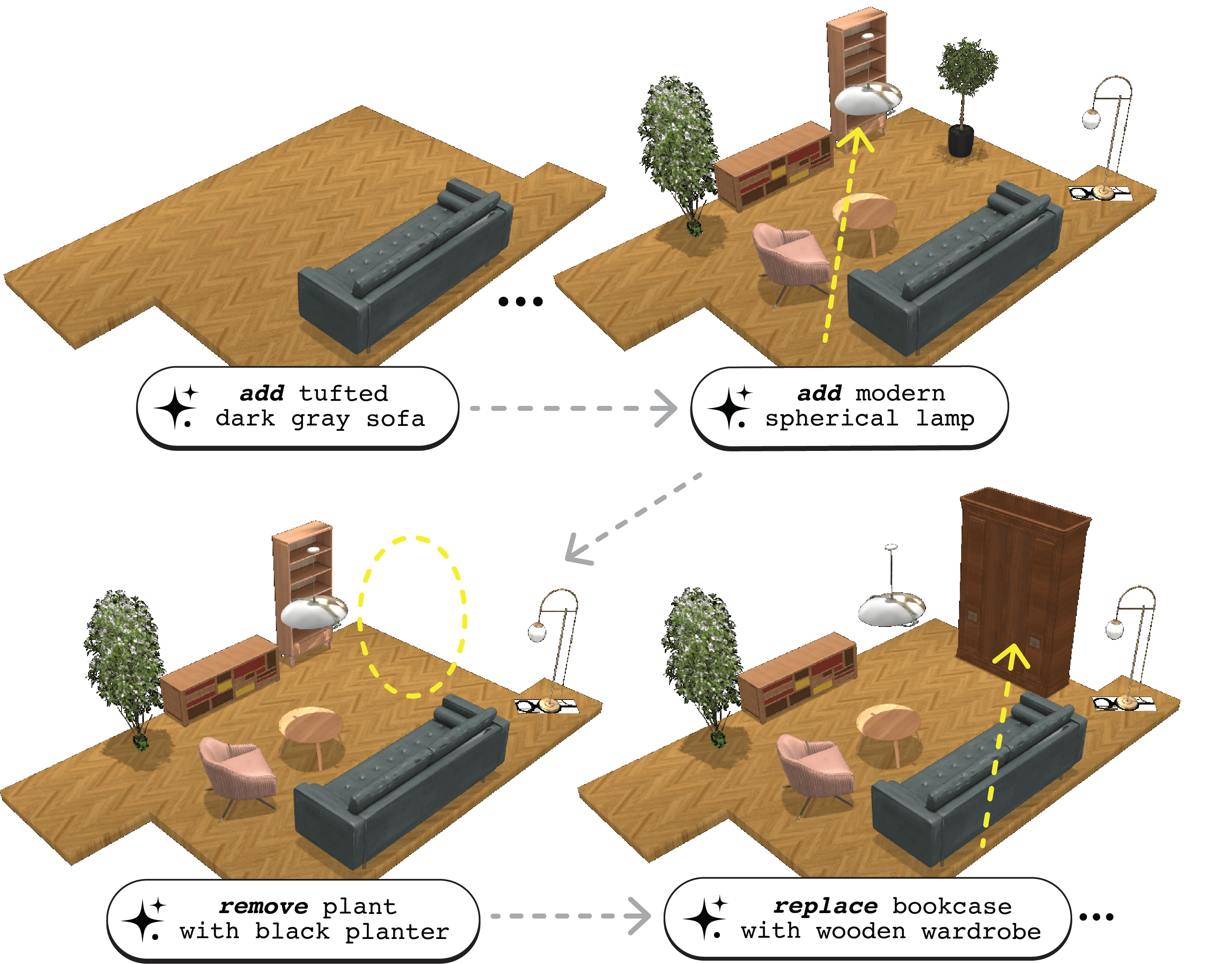

Scene synthesis and editing have emerged as a promising direction in computer graphics. Current trained approaches for 3D indoor scene generation either oversimplify object semantics through one-hot class encodings (e.g., 'chair' or 'table'), require masked diffusion for editing, ignore room boundaries, or rely on floor plan renderings that fail to capture complex layouts. LLM-based methods enable richer semantics via natural language, but lack editing functionality, are limited to rectangular layouts, or rely on weak spatial reasoning from implicit world models. We introduce ReSpace, a generative framework for autoregressive text-driven 3D indoor scene synthesis and editing. Our approach features a compact structured scene representation with explicit room boundaries that enables asset-agnostic deployment and frames scene manipulation as a next-token prediction task, supporting object addition, removal, and swapping via natural language. We employ supervised fine-tuning with a preference alignment stage to train a specialized language model for object addition that accounts for user instructions, spatial geometry, object semantics, and scene-level composition. We further introduce a voxelization-based evaluation metric that captures fine-grained geometric violations beyond 3D bounding boxes. In our experiments, ReSpace surpasses the state of the art on object addition and achieves superior human-perceived quality on full scene synthesis, despite not being trained for that task.
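To illustrate why a voxel-level check can be finer than a bounding-box check, the following minimal Python sketch compares the two on a toy example. It is not the metric defined in this work: the function names, the 0.1 voxel size, and the representation of each object as a union of axis-aligned boxes (a stand-in for a mesh) are all assumptions made purely for illustration.

```python
import math
from itertools import product

EPS = 1e-9  # guards against floating-point noise at voxel borders


def voxelize(boxes, voxel_size):
    """Return the set of integer voxel indices covered by a union of boxes.

    Each box is (min_corner, max_corner), both 3-tuples of floats.
    Hypothetical stand-in for voxelizing a real object mesh.
    """
    voxels = set()
    for lo, hi in boxes:
        axes = [
            range(math.floor(a / voxel_size + EPS), math.ceil(b / voxel_size - EPS))
            for a, b in zip(lo, hi)
        ]
        voxels.update(product(*axes))
    return voxels


def bounding_box(boxes):
    """Axis-aligned bounding box enclosing all boxes of one object."""
    lo = tuple(min(b[0][i] for b in boxes) for i in range(3))
    hi = tuple(max(b[1][i] for b in boxes) for i in range(3))
    return lo, hi


def bbox_overlap_volume(boxes_a, boxes_b):
    """Intersection volume of the two objects' bounding boxes."""
    (alo, ahi), (blo, bhi) = bounding_box(boxes_a), bounding_box(boxes_b)
    volume = 1.0
    for i in range(3):
        extent = min(ahi[i], bhi[i]) - max(alo[i], blo[i])
        if extent <= 0:
            return 0.0
        volume *= extent
    return volume


def voxel_overlap_volume(boxes_a, boxes_b, voxel_size=0.1):
    """Volume of voxels occupied by both objects."""
    shared = voxelize(boxes_a, voxel_size) & voxelize(boxes_b, voxel_size)
    return len(shared) * voxel_size ** 3


# An L-shaped sofa and a stool placed in the sofa's empty corner.
sofa = [((0.0, 0.0, 0.0), (2.0, 1.0, 1.0)), ((0.0, 1.0, 0.0), (1.0, 2.0, 1.0))]
stool = [((1.2, 1.2, 0.0), (1.8, 1.8, 0.5))]

print("bounding-box overlap:", bbox_overlap_volume(sofa, stool))
print("voxel overlap:", voxel_overlap_volume(sofa, stool))
```

In this toy scene the stool sits inside the sofa's bounding box but not inside the sofa itself, so the bounding-box test reports a collision while the voxel test does not. This is the kind of discrepancy that motivates measuring violations at a finer granularity than 3D bounding boxes.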